MongoDB Sharding

Sharding

MongoDB also offers another kind of cluster, sharding, which lets it keep up with large and rapidly growing volumes of data.

When MongoDB stores very large amounts of data, a single machine may not have enough capacity to hold it, or may not deliver acceptable read and write throughput. In that case we can split the data across multiple machines, so that the database system as a whole can store and process more data.

Why shard

- In a replica set, all write operations go to the primary node

- Latency-sensitive queries have to be served by the primary

- A single replica set is limited to 12 members (in older releases; MongoDB 3.0 and later allow up to 50)

- Memory runs short when the request volume is very large

- Local disk space runs out

- Vertical scaling is expensive

Sharding in MongoDB

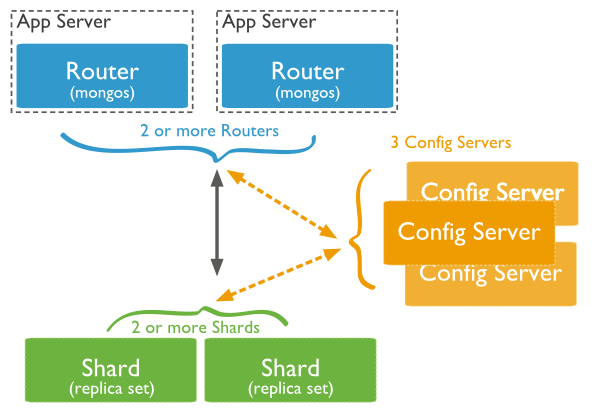

The figure above shows how a sharded cluster is laid out in MongoDB. It has three main components:

- Shard:

Stores the actual data chunks. In a production environment, one shard is usually served by several machines forming a replica set, so that the failure of a single host does not take the shard down.

- Config Server:

A mongod instance that stores the metadata for the entire cluster, including the chunk information.

- Query Routers:

The front-end routers that clients connect to. They make the whole cluster look like a single database, so front-end applications can use it transparently.
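To make the division of labour concrete, here is a hypothetical JavaScript sketch (not MongoDB's actual routing code) of how a query router could use the chunk metadata held by the config server to pick a shard. The chunk table, its bounds and the shard names are made-up examples; `-Infinity`/`Infinity` stand in for MongoDB's MinKey/MaxKey, and the key fields `id` and `time` mirror the compound shard key used later in this example.

```javascript
// Hypothetical sketch: a router consulting chunk metadata to choose a shard.
// -Infinity / Infinity stand in for MongoDB's MinKey / MaxKey bounds.
const SHARD_KEY = ["id", "time"];

// Chunk metadata as a config server might describe it: [min, max) ranges of
// the compound key, each owned by one shard. Values are illustrative only.
const exampleChunks = [
  { min: { id: -Infinity, time: -Infinity }, max: { id: 100, time: -Infinity }, shard: "shard0000" },
  { min: { id: 100, time: -Infinity }, max: { id: 200, time: -Infinity }, shard: "shard0001" },
  { min: { id: 200, time: -Infinity }, max: { id: Infinity, time: Infinity }, shard: "shard0002" },
];

// Compare two compound keys field by field, in shard-key order.
function compareKeys(a, b, keyFields) {
  for (const field of keyFields) {
    if (a[field] < b[field]) return -1;
    if (a[field] > b[field]) return 1;
  }
  return 0;
}

// Return the shard whose chunk range contains the document's shard-key value.
function routeToShard(doc, chunks, keyFields) {
  for (const chunk of chunks) {
    const atOrAboveMin = compareKeys(doc, chunk.min, keyFields) >= 0;
    const belowMax = compareKeys(doc, chunk.max, keyFields) < 0;
    if (atOrAboveMin && belowMax) return chunk.shard;
  }
  throw new Error("no chunk covers this shard-key value");
}
```

For example, `routeToShard({ id: 100, time: 0 }, exampleChunks, SHARD_KEY)` lands in the second chunk, because compound keys are ordered by `id` first and only then by `time`.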

Sharding example

The ports of the sharded cluster are assigned as follows:

- Shard Server 1: 27020
- Shard Server 2: 27021
- Shard Server 3: 27022
- Shard Server 4: 27023
- Config Server: 27100
- Route Process: 40000

Step One: Start the Shard Servers

    [root@100 /]# mkdir -p /www/mongoDB/shard/s0
    [root@100 /]# mkdir -p /www/mongoDB/shard/s1
    [root@100 /]# mkdir -p /www/mongoDB/shard/s2
    [root@100 /]# mkdir -p /www/mongoDB/shard/s3
    [root@100 /]# mkdir -p /www/mongoDB/shard/log
    [root@100 /]# /usr/local/mongoDB/bin/mongod --port 27020 --dbpath=/www/mongoDB/shard/s0 --logpath=/www/mongoDB/shard/log/s0.log --logappend --fork
    ....
    [root@100 /]# /usr/local/mongoDB/bin/mongod --port 27023 --dbpath=/www/mongoDB/shard/s3 --logpath=/www/mongoDB/shard/log/s3.log --logappend --fork

Step Two: Start the Config Server

    [root@100 /]# mkdir -p /www/mongoDB/shard/config
    [root@100 /]# /usr/local/mongoDB/bin/mongod --port 27100 --dbpath=/www/mongoDB/shard/config --logpath=/www/mongoDB/shard/log/config.log --logappend --fork

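Note: The shard servers and the config server are started here just like ordinary mongod services, without the --shardsvr and --configsvr parameters. In the MongoDB version used in this example, the main effect of those two parameters is to change the default port, and we specify every port ourselves. (This no longer holds from MongoDB 3.4 onward: config servers must run as a replica set started with --configsvr, and shard members must be started with --shardsvr.)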

Step Three: Start the Route Process

    /usr/local/mongoDB/bin/mongos --port 40000 --configdb localhost:27100 --fork --logpath=/www/mongoDB/shard/log/route.log --chunkSize 500

Among the mongos startup parameters, --chunkSize sets the size of each chunk in MB; here it is 500 MB. If it is omitted, the default is 64 MB in MongoDB 2.x (very early releases used 200 MB).
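A rough worked example of what the chunk size means for a collection, assuming chunks are split once they grow past the configured size and ignoring oversized ("jumbo") chunks that cannot be split. The 10 GB collection size $S$ is a hypothetical figure; $c$ is the 500 MB chunk size passed to mongos above.

```latex
N_{\text{chunks}} \ge \left\lceil \frac{S}{c} \right\rceil
  = \left\lceil \frac{10240\ \text{MB}}{500\ \text{MB}} \right\rceil
  = \lceil 20.48 \rceil = 21
```

Spread over the four shards, that is $21 / 4 = 5.25$, so a balanced cluster would hold 5 or 6 of these chunks per shard. With a 64 MB chunk size the same data needs at least $\lceil 10240 / 64 \rceil = 160$ chunks: smaller chunks give a more even distribution at the cost of more frequent splits and migrations.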

Step Four: Configure Sharding

Next, use the MongoDB shell to log in to mongos and add the shard nodes:

    [root@100 shard]# /usr/local/mongoDB/bin/mongo admin --port 40000
    MongoDB shell version: 2.0.7
    connecting to: 127.0.0.1:40000/admin
    mongos> db.runCommand({ addshard:"localhost:27020" })
    { "shardAdded" : "shard0000", "ok" : 1 }
    ......

    mongos> db.runCommand({ addshard:"localhost:27023" })
    { "shardAdded" : "shard0003", "ok" : 1 }

    mongos> db.runCommand({ enablesharding:"test" }) // enable sharding for the database that will hold sharded collections
    { "ok" : 1 }
    mongos> db.runCommand({ shardcollection: "test.log", key: { id:1,time:1}})
    { "collectionsharded" : "test.log", "ok" : 1 }
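The commands in Step Four can be bundled into one reusable function. This is a hypothetical helper (`configureSharding` is not part of MongoDB); it only issues the same `addshard`, `enablesharding` and `shardcollection` admin commands shown in the session above and stops at the first reply whose `ok` field is not 1. It is written in plain function/`var` style so it can be loaded into the mongo shell.

```javascript
// Hypothetical helper that replays Step Four as one script.
// adminDb: the shell's `db` while connected to the admin database on mongos.
function configureSharding(adminDb, shardHosts, dbName, namespace, shardKey) {
  var results = [];

  // Run one admin command; throw on the first reply whose ok field is not 1.
  function run(cmd) {
    var res = adminDb.runCommand(cmd);
    if (res.ok !== 1) {
      throw new Error("sharding command failed: " + res.errmsg);
    }
    results.push(res);
  }

  // Register every shard server with the cluster.
  for (var i = 0; i < shardHosts.length; i++) {
    run({ addshard: shardHosts[i] });
  }
  // Enable sharding on the database, then shard the collection on the given key.
  run({ enablesharding: dbName });
  run({ shardcollection: namespace, key: shardKey });
  return results;
}
```

After loading the file in the shell (for example with `load("configure-sharding.js")`, a hypothetical file name), the four shards of this example would be set up with `configureSharding(db, ["localhost:27020", "localhost:27021", "localhost:27022", "localhost:27023"], "test", "test.log", { id: 1, time: 1 })`.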

Step Five: The application code needs little change. Connect to port 40000 exactly as you would connect to an ordinary MongoDB database.